People excel at processing huge arrays of visible info, a ability that’s essential for attaining synthetic basic intelligence (AGI). Over the a long time, AI researchers have developed Visible Query Answering (VQA) programs to interpret scenes inside single photographs and reply associated questions. Whereas current developments in basis fashions have considerably closed the hole between human and machine visible processing, standard VQA has been restricted to purpose about solely single photographs at a time somewhat than complete collections of visible information.

This limitation poses challenges in additional complicated situations. Take, for instance, the challenges of discerning patterns in collections of medical photographs, monitoring deforestation by way of satellite tv for pc imagery, mapping city modifications utilizing autonomous navigation information, analyzing thematic components throughout giant artwork collections, or understanding shopper conduct from retail surveillance footage. Every of those situations entails not solely visible processing throughout lots of or hundreds of photographs but additionally necessitates cross-image processing of those findings. To deal with this hole, this undertaking focuses on the “Multi-Picture Query Answering” (MIQA) activity, which exceeds the attain of conventional VQA programs.

![]()

Visible Haystacks: the primary “visual-centric” Needle-In-A-Haystack (NIAH) benchmark designed to carefully consider Massive Multimodal Fashions (LMMs) in processing long-context visible info.

Methods to Benchmark VQA Fashions on MIQA?

The “Needle-In-A-Haystack” (NIAH) problem has just lately change into one of the vital common paradigms for benchmarking LLM’s capacity to course of inputs containing “lengthy contexts”, giant units of enter information (similar to lengthy paperwork, movies, or lots of of photographs). On this activity, important info (“the needle”), which accommodates the reply to a particular query, is embedded inside an enormous quantity of information (“the haystack”). The system should then retrieve the related info and reply the query accurately.

The primary NIAH benchmark for visible reasoning was launched by Google within the Gemini-v1.5 technical report. On this report, they requested their fashions to retrieve textual content overlaid on a single body in a big video. It seems that current fashions carry out fairly effectively on this activity—primarily as a consequence of their sturdy OCR retrieval capabilities. However what if we ask extra visible questions? Do fashions nonetheless carry out as effectively?

What’s the Visible Haystacks (VHs) Benchmark?

In pursuit of evaluating “visual-centric” long-context reasoning capabilities, we introduce the “Visible Haystacks (VHs)” benchmark. This new benchmark is designed to evaluate Massive Multimodal Fashions (LMMs) in visible retrieval and reasoning throughout giant uncorrelated picture units. VHs options roughly 1K binary question-answer pairs, with every set containing wherever from 1 to 10K photographs. In contrast to earlier benchmarks that targeted on textual retrieval and reasoning, VHs questions heart on figuring out the presence of particular visible content material, similar to objects, using photographs and annotations from the COCO dataset.

The VHs benchmark is split into two important challenges, every designed to check the mannequin’s capacity to precisely find and analyze related photographs earlier than responding to queries. We’ve fastidiously designed the dataset to make sure that guessing or counting on frequent sense reasoning with out viewing the picture gained’t get any benefits (i.e., leading to a 50% accuracy price on a binary QA activity).

Single-Needle Problem: Solely a single needle picture exists within the haystack of photographs. The query is framed as, “For the picture with the anchor object, is there a goal object?”

Multi-Needle Problem: Two to 5 needle photographs exist within the haystack of photographs. The query is framed as both, “For all photographs with the anchor object, do all of them include the goal object?” or “For all photographs with the anchor object, do any of them include the goal object?”

Three Necessary Findings from VHs

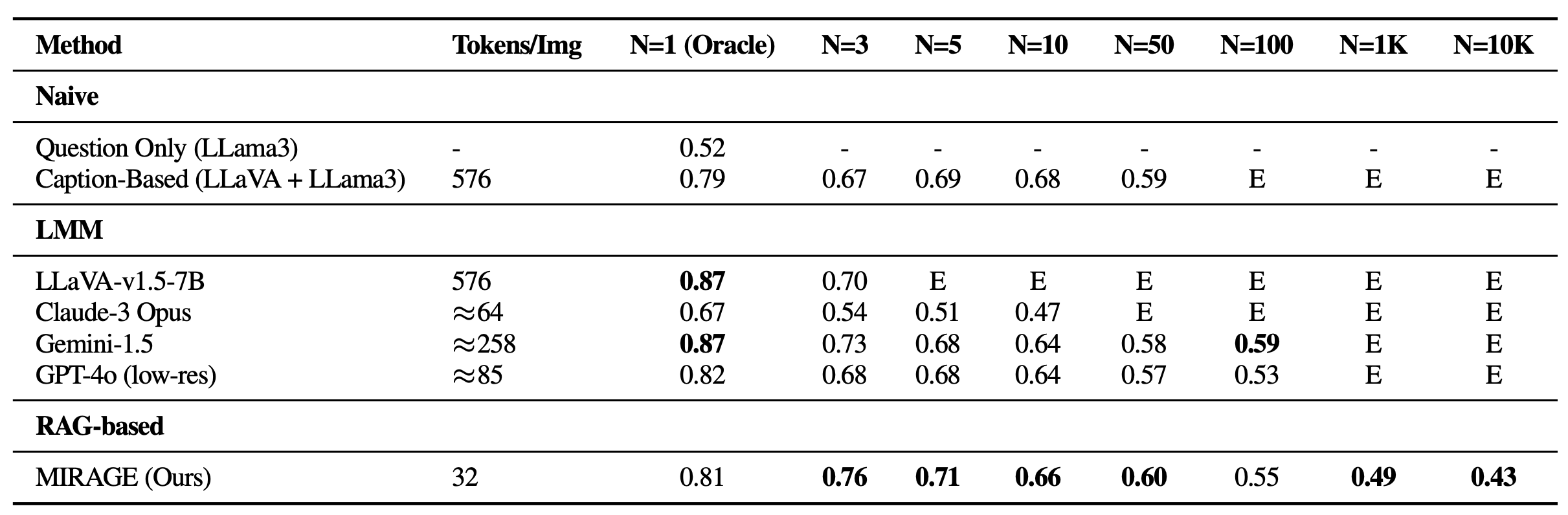

The Visible Haystacks (VHs) benchmark reveals important challenges confronted by present Massive Multimodal Fashions (LMMs) when processing in depth visible inputs. In our experiments throughout each single and multi-needle modes, we evaluated a number of open-source and proprietary strategies together with LLaVA-v1.5, GPT-4o, Claude-3 Opus, and Gemini-v1.5-pro. Moreover, we embrace a “Captioning” baseline, using a two-stage strategy the place photographs are initially captioned utilizing LLaVA, adopted by answering the query utilizing the captions’ textual content content material with Llama3. Under are three pivotal insights:

Struggles with Visible Distractors

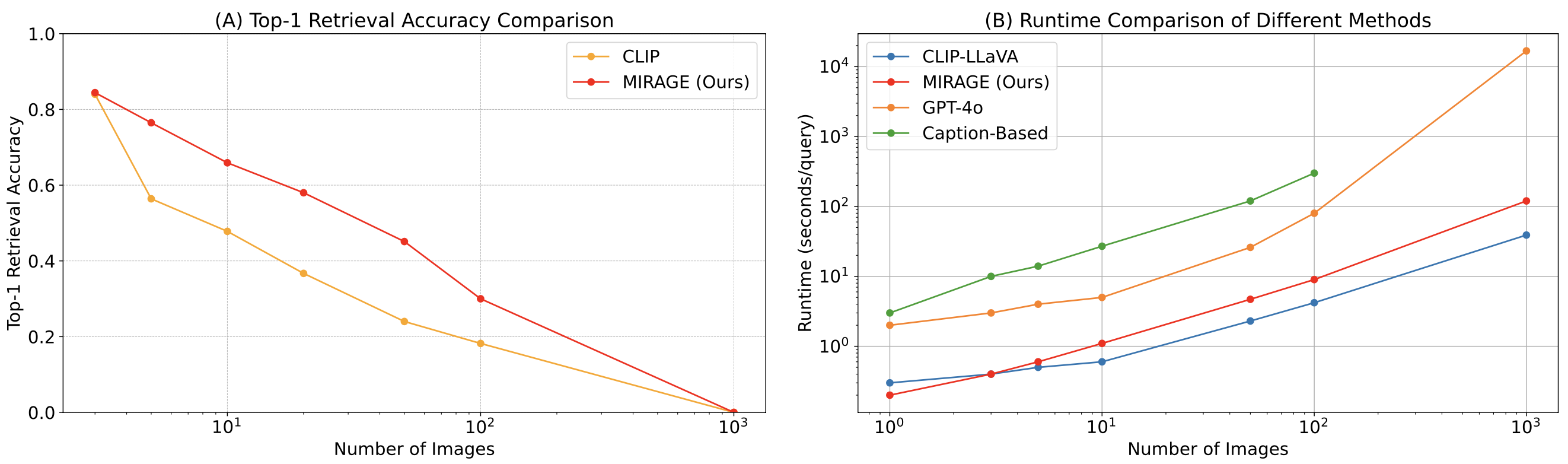

In single-needle settings, a notable decline in efficiency was noticed because the variety of photographs elevated, regardless of sustaining excessive oracle accuracy—a state of affairs absent in prior text-based Gemini-style benchmarks. This reveals that current fashions might primarily battle with visible retrieval, particularly within the presence of difficult visible distractors. Moreover, it’s essential to focus on the constraints on open-source LMMs like LLaVA, which may deal with solely as much as three photographs as a consequence of a 2K context size restrict. Alternatively, proprietary fashions similar to Gemini-v1.5 and GPT-4o, regardless of their claims of prolonged context capabilities, typically fail to handle requests when the picture rely exceeds 1K, as a consequence of payload measurement limits when utilizing the API name.

Efficiency on VHs for single-needle questions. All fashions expertise important falloff as the scale of the haystack (N) will increase, suggesting none of them are sturdy towards visible distractors. E: Exceeds context size.Issue Reasoning Throughout A number of Pictures

Apparently, all LMM-based strategies confirmed weak efficiency with 5+ photographs in single-image QA and all multi-needle settings in comparison with a fundamental strategy chaining a captioning mannequin (LLaVA) with an LLM aggregator (Llama3). This discrepancy means that whereas LLMs are able to integrating long-context captions successfully, current LMM-based options are insufficient for processing and integrating info throughout a number of photographs. Notably, the efficiency massively deteriorates in multi-image situations, with Claude-3 Opus displaying weak outcomes with solely oracle photographs, and Gemini-1.5/GPT-4o dropping to 50% accuracy (identical to a random guess) with bigger units of fifty photographs.

Outcomes on VHs for multi-needle questions. All visually-aware fashions carry out poorly, indicating that fashions discover it difficult to implicitly combine visible info.Phenomena in Visible Area

Lastly, we discovered that the accuracy of LMMs is massively affected by the place of the needle picture inside the enter sequence. As an example, LLaVA reveals higher efficiency when the needle picture is positioned instantly earlier than the query, struggling as much as a 26.5% drop in any other case. In distinction, proprietary fashions typically carry out higher when the picture is positioned in the beginning, experiencing as much as a 28.5% lower when not. This sample echoes the “lost-in-the-middle” phenomenon seen within the area of Pure Language Processing (NLP), the place essential info positioned at first or finish of the context influences mannequin efficiency. This concern was not evident in earlier Gemini-style NIAH analysis, which solely required textual content retrieval and reasoning, underscoring the distinctive challenges posed by our VHs benchmark.

Needle place vs. efficiency on VHs for varied picture settings. Current LMMs present as much as 41% efficiency drop when the needle just isn’t ideally positioned. Grey containers: Exceeds context size.

MIRAGE: A RAG-based Resolution for Improved VHs Efficiency

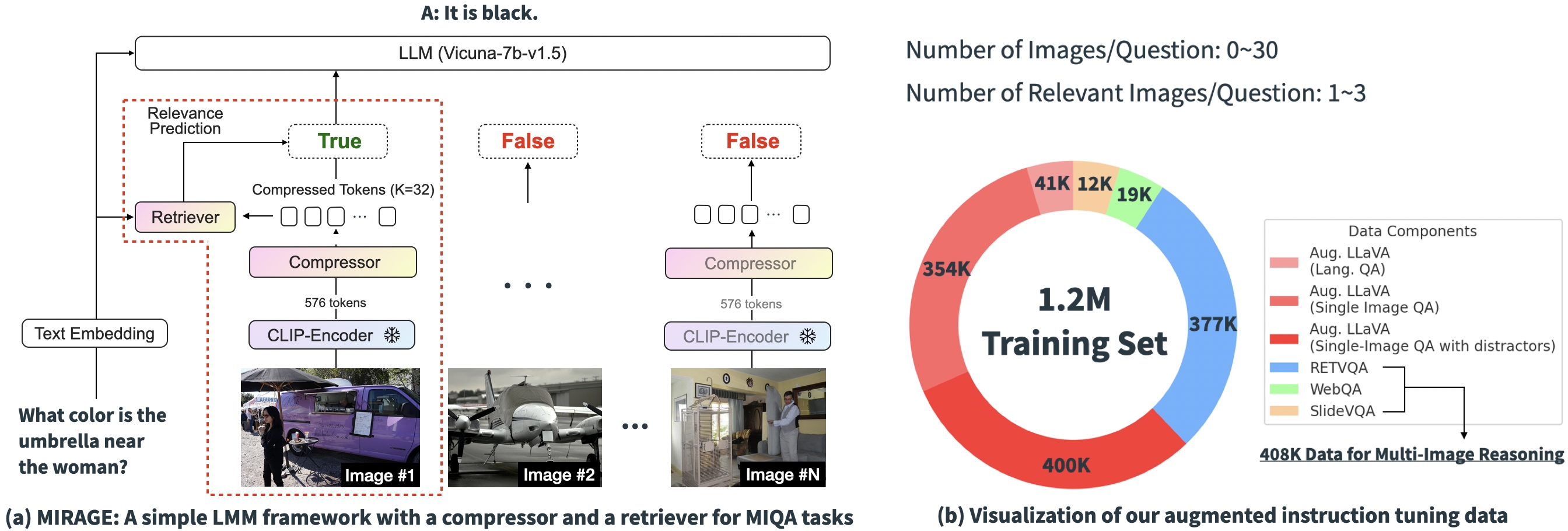

Based mostly on the experimental outcomes above, it’s clear that the core challenges of current options in MIQA lie within the capacity to (1) precisely retrieve related photographs from an enormous pool of doubtless unrelated photographs with out positional biases and (2) combine related visible info from these photographs to accurately reply the query. To deal with these points, we introduce an open-source and easy single-stage coaching paradigm, “MIRAGE” (Multi-Picture Retrieval Augmented Era), which extends the LLaVA mannequin to deal with MIQA duties. The picture under reveals our mannequin structure.

Our proposed paradigm consists of a number of elements, every designed to alleviate key points within the MIQA activity:

Compress current encodings: The MIRAGE paradigm leverages a query-aware compression mannequin to scale back the visible encoder tokens to a smaller subset (10x smaller), permitting for extra photographs in the identical context size.

Make use of retriever to filter out irrelevant message: MIRAGE makes use of a retriever skilled in-line with the LLM fine-tuning, to foretell if a picture will probably be related, and dynamically drop irrelevant photographs.

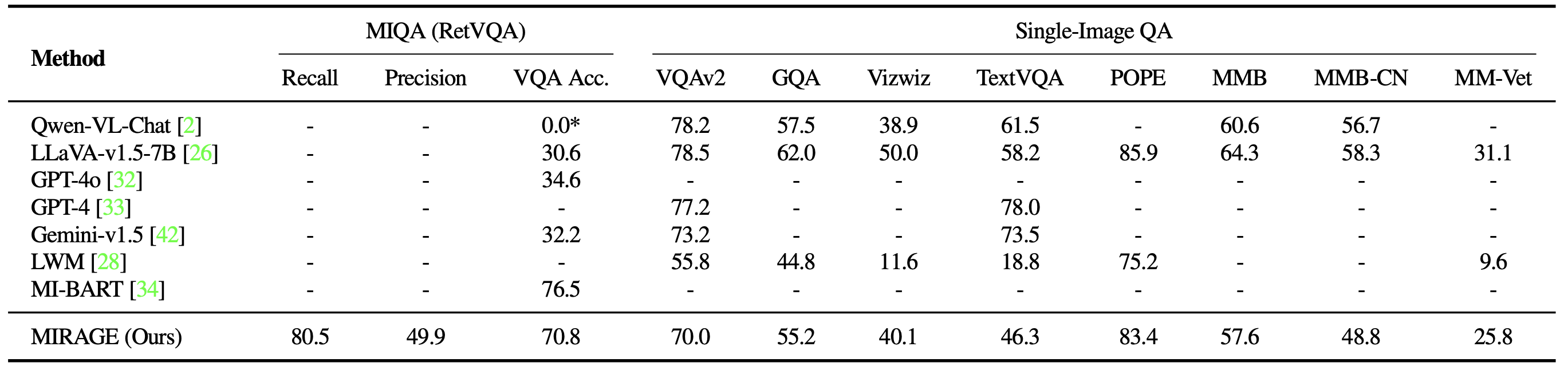

Multi-Picture Coaching Knowledge: MIRAGE augments current single-image instruction fine-tuning information with multi-image reasoning information, and artificial multi-image reasoning information.

Outcomes

We revisit the VHs benchmark with MIRAGE. Along with being able to dealing with 1K or 10K photographs, MIRAGE achieves state-of-the-art efficiency on most single-needle duties, regardless of having a weaker single-image QA spine with solely 32 tokens per picture!

We additionally benchmark MIRAGE and different LMM-based fashions on quite a lot of VQA duties. On multi-image duties, MIRAGE demonstrates sturdy recall and precision capabilities, considerably outperforming sturdy opponents like GPT-4, Gemini-v1.5, and the Massive World Mannequin (LWM). Moreover, it reveals aggressive single-image QA efficiency.

Lastly, we evaluate MIRAGE’s co-trained retriever with CLIP. Our retriever performs considerably higher than CLIP with out shedding effectivity. This reveals that whereas CLIP fashions could be good retrievers for open-vocabulary picture retrieval, they might not work effectively when coping with question-like texts!

On this work, we develop the Visible Haystacks (VHs) benchmark and recognized three prevalent deficiencies in current Massive Multimodal Fashions (LMMs):

Struggles with Visible Distractors: In single-needle duties, LMMs exhibit a pointy efficiency decline because the variety of photographs will increase, indicating a major problem in filtering out irrelevant visible info.

Issue Reasoning Throughout A number of Pictures: In multi-needle settings, simplistic approaches like captioning adopted by language-based QA outperform all current LMMs, highlighting LMMs’ insufficient capacity to course of info throughout a number of photographs.

Phenomena in Visible Area: Each proprietary and open-source fashions show sensitivity to the place of the needle info inside picture sequences, exhibiting a “loss-in-the-middle” phenomenon within the visible area.

In response, we suggest MIRAGE, a pioneering visible Retriever-Augmented Generator (visual-RAG) framework. MIRAGE addresses these challenges with an revolutionary visible token compressor, a co-trained retriever, and augmented multi-image instruction tuning information.

After exploring this weblog put up, we encourage all future LMM tasks to benchmark their fashions utilizing the Visible Haystacks framework to establish and rectify potential deficiencies earlier than deployment. We additionally urge the neighborhood to discover multi-image query answering as a method to advance the frontiers of true Synthetic Basic Intelligence (AGI).

Final however not least, please take a look at our undertaking web page, and arxiv paper, and click on the star button in our github repo!

@article{wu2024visual,

title={Visible Haystacks: Answering Tougher Questions About Units of Pictures},

writer={Wu, Tsung-Han and Biamby, Giscard and and Quenum, Jerome and Gupta, Ritwik and Gonzalez, Joseph E and Darrell, Trevor and Chan, David M},

journal={arXiv preprint arXiv:2407.13766},

12 months={2024}

}